Do you understand the Video API?

This article explains what a video API is and how it ensures smooth transmission of audio and video.

What is a video API?

A video API is an interface dedicated to providing audio and video transmission services. There are two main types: static video APIs and live video APIs.

Static Video API

A static video API provides video file playback services. Service providers offer cloud storage and CDN distribution for video files and expose them through protocol interfaces such as HTTP and RTMP.

For example, YouTube and Instagram use a static video API.

Live Video API

A live video API transmits audio and video between users in real time.

For example, Live.me, Yalla, and Uplive use a live video API.

The static video API is easy to understand: it pulls video files from the cloud through a streaming protocol.

The live video API is more complicated. How does it ensure the video can be transmitted to the other end quickly, smoothly, and clearly?

In this article, we will introduce the logic behind the live video API in detail.

What Can The Video API Do?

Applications of the live video API keep expanding as network bandwidth and device performance improve. It makes many scenarios possible, such as:

- Live streaming

- Online education

- Video conferencing

- Telemedicine

- Video calls

- Multiplayer

What Happens Behind The Video API?

A live video API must complete end-to-end transmission of video data within 500 ms while keeping the picture clear and the call smooth.

In other words, it has to move a large volume of audio and video data from end to end quickly and reliably. A complex system is required to make that possible.

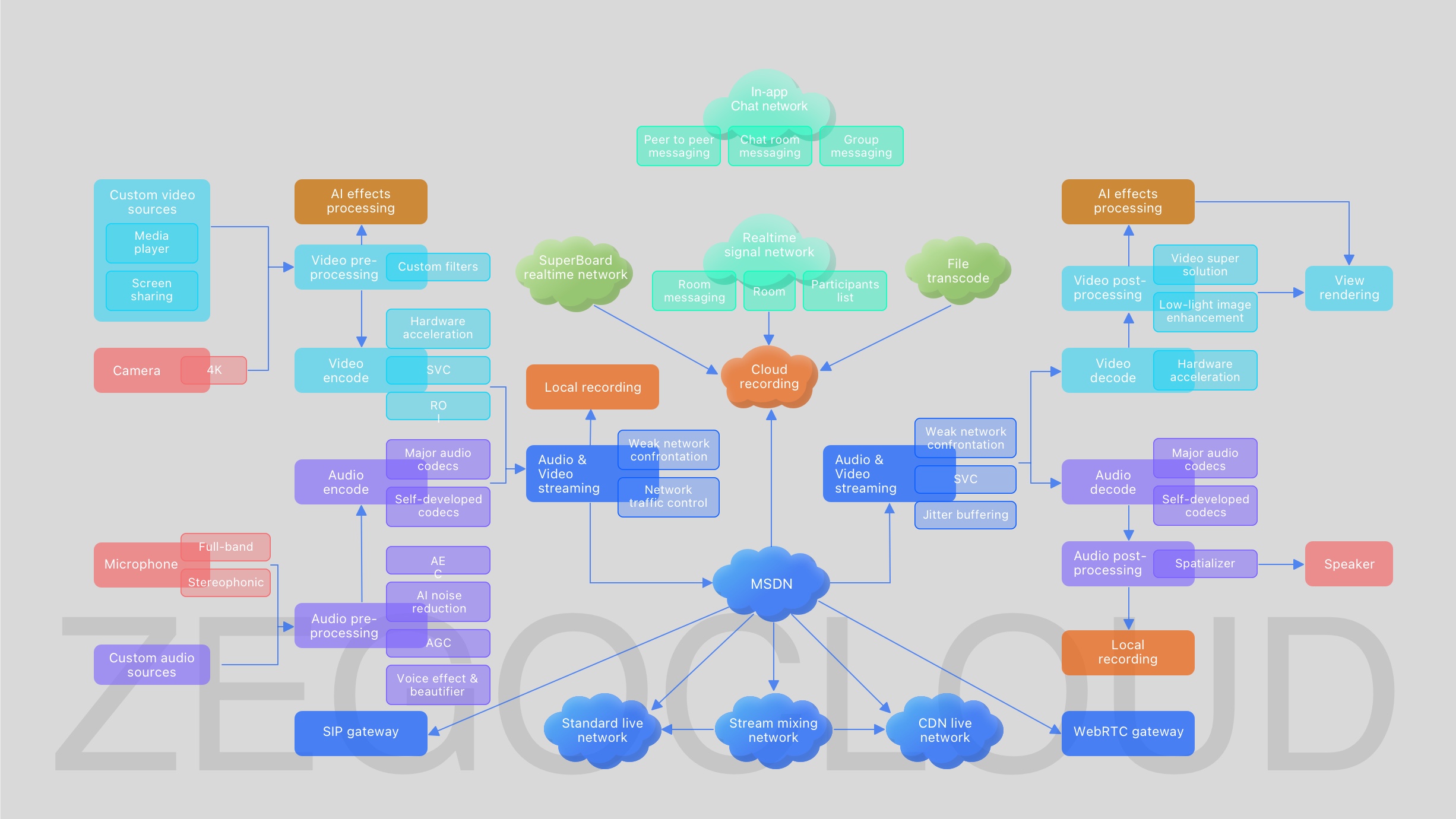

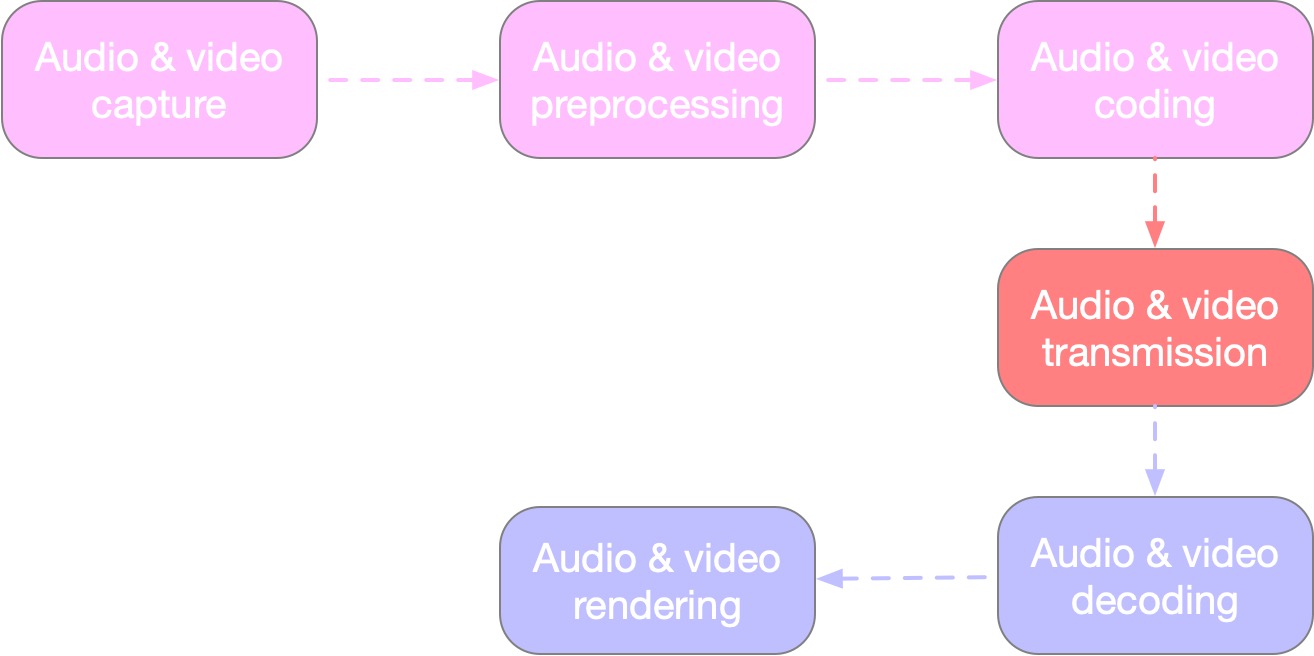

As shown in the figure, the live video API is organized into six functional modules:

1. Audio and video capture

Audio and video capture is the source of the audio and video data, which is collected from cameras, microphones, screens, video files, recording files, and other channels.

It involves color spaces, such as RGB and YUV, and audio parameters, such as sampling rate, number of channels, bit rate, audio frame, etc.
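As a small illustration of the color spaces mentioned above, the sketch below converts a single RGB pixel to YUV. It assumes the full-range BT.601 coefficients (the ones used by JPEG); a real capture pipeline may use a different matrix or value range, and the function name `rgb_to_yuv` is made up for this example.

```python
def rgb_to_yuv(r, g, b):
    """Convert one 8-bit RGB pixel to 8-bit YUV (full-range BT.601, an assumption)."""

    def clamp(value):
        # Round to the nearest integer and keep the result inside 0..255.
        return max(0, min(255, round(value)))

    y = 0.299 * r + 0.587 * g + 0.114 * b              # luma (brightness)
    u = -0.168736 * r - 0.331264 * g + 0.5 * b + 128   # blue-difference chroma
    v = 0.5 * r - 0.418688 * g - 0.081312 * b + 128    # red-difference chroma
    return clamp(y), clamp(u), clamp(v)


if __name__ == "__main__":
    print(rgb_to_yuv(255, 255, 255))  # white: full luma, neutral chroma
    print(rgb_to_yuv(0, 0, 0))        # black: zero luma, neutral chroma
```

Separating brightness (Y) from color (U, V) is what lets later stages store the color channels at lower resolution than the brightness channel.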

2. Audio and video preprocessing

Audio and video preprocessing is driven by business scenarios: the captured data is processed further to improve the user experience.

For example:

- Video data processing includes beautification, filters, special effects, etc.

- Audio data processing includes voice change, reverberation, echo cancellation, noise suppression, volume gain, etc.
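To make one of these steps concrete, here is a minimal sketch of volume gain on raw audio. It assumes 16-bit signed PCM samples held in a plain Python list; the function name `apply_gain` is hypothetical, and production code would normally work on buffers inside the SDK rather than on lists.

```python
def apply_gain(samples, gain_db):
    """Scale 16-bit PCM samples by gain_db decibels, clipping to the int16 range."""
    factor = 10 ** (gain_db / 20)  # convert decibels to a linear amplitude ratio
    out = []
    for sample in samples:
        scaled = round(sample * factor)
        # Clip instead of wrapping around, which would produce loud artifacts.
        out.append(max(-32768, min(32767, scaled)))
    return out


if __name__ == "__main__":
    quiet = [1000, -1000, 30000, -30000]
    print(apply_gain(quiet, 6))  # about twice the amplitude, loud samples clipped
```

A gain of +6 dB roughly doubles the amplitude, which is why the clipping step matters for samples that are already loud.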

3. Audio and video encoding

Audio and video encoding compresses the data so that it can be transmitted quickly and efficiently over the network.

Commonly used encoding formats are:

- Video encoding formats: H.264, H.265

- Audio encoding formats: Opus, AAC
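A quick calculation shows why encoding is needed before transmission. The sketch below assumes uncompressed YUV 4:2:0 video at 12 bits per pixel, and the 1.5 Mbps encoder target is only an illustrative value, not a figure from this article.

```python
def raw_video_bitrate(width, height, fps, bits_per_pixel=12):
    """Bits per second of uncompressed video; 12 bits/pixel corresponds to YUV 4:2:0."""
    return width * height * fps * bits_per_pixel


def compression_ratio(raw_bps, encoded_bps):
    """How many times smaller the encoded stream is than the raw one."""
    return raw_bps / encoded_bps


if __name__ == "__main__":
    raw = raw_video_bitrate(1280, 720, 30)
    target = 1_500_000  # illustrative encoder target, an assumption
    print(f"raw: {raw / 1e6:.1f} Mbps, ratio: {compression_ratio(raw, target):.0f}x")
```

Under these assumptions, 720p video at 30 frames per second is over 300 Mbps uncompressed, so the encoder has to shrink it by a factor of a few hundred.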

4. Audio and video transmission

Audio and video transmission is the most complex module in the video API. To ensure that the audio and video data can be transmitted to the opposite end quickly, stably, and with high quality in a complex network environment, streaming protocols such as RTP, HTTP-FLV, HLS, and RTMP can be used.

Problems such as packet loss and out-of-order delivery must be handled with multiple techniques, including data redundancy, flow control, adaptive frame rate and resolution, and jitter buffering.

So when judging whether a video API is worth choosing, focus on whether the vendor has a clear advantage in audio and video transmission.
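Jitter buffering, one of the techniques named in this section, can be sketched in a few lines. This is a simplified illustration and not how any particular vendor implements it: the class name `JitterBuffer`, the `max_held` limit, and the rule of skipping a missing packet once too many later packets are waiting are all assumptions, and sequence-number wraparound is ignored.

```python
class JitterBuffer:
    """Reorders packets by sequence number and skips ones that never arrive."""

    def __init__(self, max_held=3):
        self.max_held = max_held  # how many out-of-order packets to hold before skipping
        self.next_seq = 0         # sequence number expected next
        self.held = {}            # seq -> payload, waiting for earlier packets

    def push(self, seq, payload):
        """Accept one packet; return the payloads that can now be played, in order."""
        if seq < self.next_seq or seq in self.held:
            return []  # too late or a duplicate: drop it
        self.held[seq] = payload
        ready = []
        while True:
            if self.next_seq in self.held:
                ready.append(self.held.pop(self.next_seq))
                self.next_seq += 1
            elif len(self.held) > self.max_held:
                # Too many packets are waiting: treat the gap as lost and move on.
                self.next_seq = min(self.held)
            else:
                break
        return ready


if __name__ == "__main__":
    jb = JitterBuffer(max_held=2)
    for seq, payload in [(0, "a"), (2, "c"), (1, "b")]:
        print(seq, jb.push(seq, payload))
```

The trade-off is latency against completeness: a larger `max_held` tolerates more reordering but delays playback longer when a packet is truly lost.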

5. Audio and video decoding

After the audio and video data reaches the receiving end, the receiver decodes it according to the format it was encoded in.

There are two video decoding methods: hardware decoding and software decoding. Software decoding generally uses the open-source library FFmpeg. Audio decoding is done in software only; use FDK AAC or Opus decoding according to the audio encoding format.

6. Audio and video rendering

Audio and video rendering is the last step in the video API pipeline. This step seems very simple: you only need to call the system interface to render the data to the screen.

However, keeping the video picture aligned with the audio takes a lot of logic. Fortunately, there is now a standard approach for keeping audio and video synchronized.
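The article does not say which synchronization method it has in mind. One common approach, shown here purely as an assumption, treats the audio clock as the master and decides for each video frame whether to show it, hold it, or drop it. The function name `sync_action` and the 40 ms threshold are illustrative choices.

```python
def sync_action(video_pts_ms, audio_clock_ms, threshold_ms=40):
    """Decide what to do with a video frame relative to the audio playback clock."""
    diff = video_pts_ms - audio_clock_ms
    if diff > threshold_ms:
        return "wait"    # frame is early: hold it until the audio catches up
    if diff < -threshold_ms:
        return "drop"    # frame is late: skip it so video catches up with audio
    return "render"      # close enough to the audio clock: show it now


if __name__ == "__main__":
    for pts in (900, 1000, 1100):
        print(pts, sync_action(pts, audio_clock_ms=1000))
```

Audio is usually chosen as the reference because listeners notice gaps or speed changes in sound more readily than a dropped or delayed video frame.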

How to use the ZEGOCLOUD video API

Building a complete real-time audio and video system is complex work. Fortunately, many video APIs handle the complex underlying operations for us, so we only need to focus on the upper-level business logic.

The following sections show how to use the ZEGOCLOUD Video API to implement video calling.

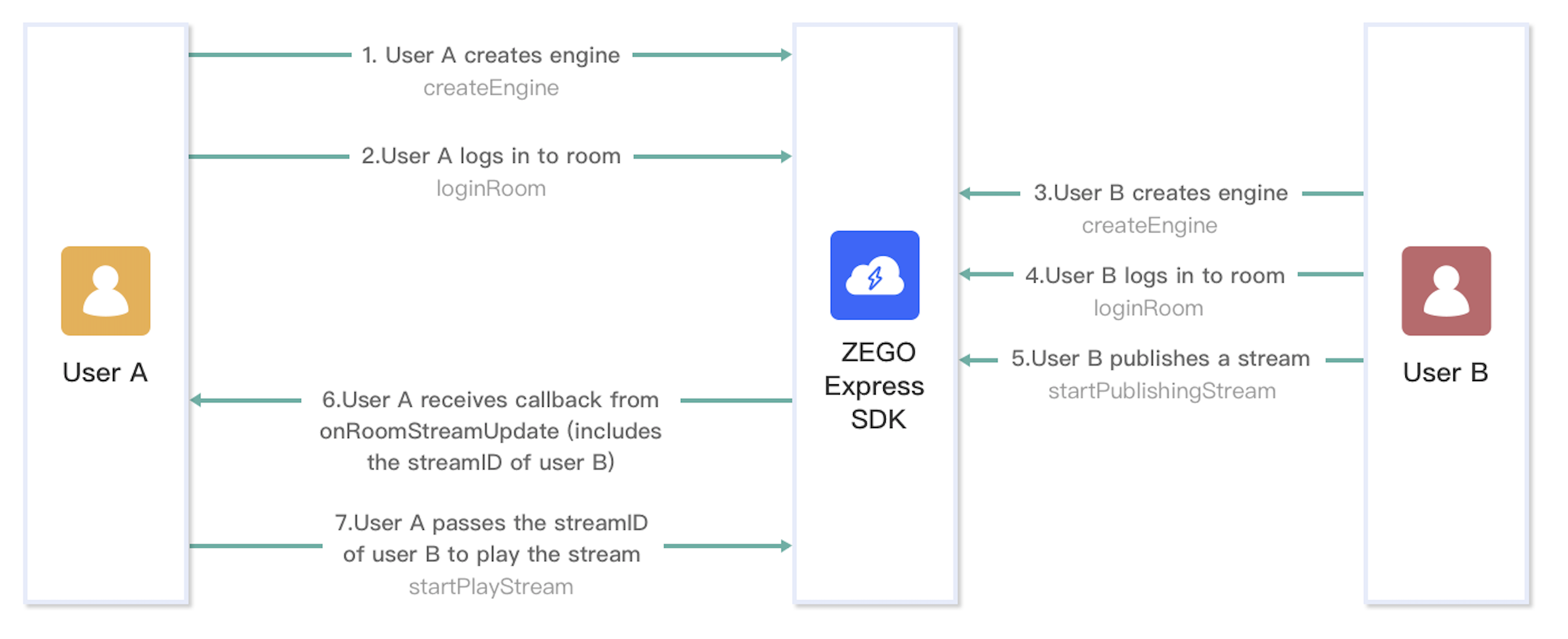

1. Implementation process

The following diagram shows the basic process of User A playing a stream published by User B:

The following sections explain each step of this process in more detail.

2. Optional: Create the UI

Before creating a ZegoExpressEngine instance, we recommend you add the following UI elements to implement basic real-time audio and video features:

- A view for local preview

- A view for remote video

- An End button

3. Create a ZegoExpressEngine instance

To create a singleton instance of the ZegoExpressEngine class, call the createEngine method with the AppID of your project.

4. Log in to a room

To log in to a room, call the loginRoom method.

Then, to listen for and handle various events that may happen after logging in to a room, you can implement the corresponding event callback methods of the event handler as needed.

5. Start the local video preview

To start the local video preview, call the startPreview method with the view for rendering the local video passed to the canvas parameter.

You can use a SurfaceView, TextureView, or SurfaceTexture to render the video.

6. Publish streams

To start publishing a local audio or video stream to remote users, call the startPublishingStream method with the corresponding Stream ID passed to the streamID parameter.

Then, to listen for and handle various events that may happen after stream publishing starts, you can implement the corresponding event callback methods of the event handler as needed.

7. Play streams

To start playing a remote audio or video stream, call the startPlayingStream method with the corresponding Stream ID passed to the streamID parameter and the view for rendering the video passed to the canvas parameter.

You can use a SurfaceView, TextureView, or SurfaceTexture to render the video.

8. Stop publishing and playing streams

To stop publishing a local audio or video stream to remote users, call the stopPublishingStream method.

If the local video preview has been started, call the stopPreview method to stop it as needed.

To stop playing a remote audio or video stream, call the stopPlayingStream method with the corresponding stream ID passed to the streamID parameter.

9. Log out of a room

To log out of a room, call the logoutRoom method with the corresponding room ID passed to the roomID parameter.

Sign up with ZEGOCLOUD, get 10,000 minutes free every month.

Thank you for reading :)

Learn more

This article is one of a series on live video technology. You are welcome to read the other articles: